ASIA ELECTRONICS INDUSTRY: YOUR WINDOW TO SMART MANUFACTURING

NVIDIA Grace Superchips Power Taiwan-Made Servers

At COMPUTEX Taipei 2022, NVIDIA announced that Taiwan’s leading computer makers are set to release the first wave of systems powered by the NVIDIA Grace™ CPU Superchip and Grace Hopper Superchip for a wide range of workloads. These span digital twins, artificial intelligence (AI), high-performance computing, cloud graphics, and gaming.

Dozens of server models from ASUS, Foxconn Industrial Internet, GIGABYTE, QCT, Supermicro, and Wiwynn are expected starting in the first half of 2023. The Grace-powered systems will join x86 and other Arm-based servers to offer customers a broad range of choice for achieving high performance and efficiency in their data centers.

“A new type of data center is emerging — AI factories that process and refine mountains of data to produce intelligence; and NVIDIA is working closely with our Taiwan partners to build the systems that enable this transformation,” said Ian Buck, vice president of Hyperscale and HPC at NVIDIA. “These new systems from our partners, powered by our Grace Superchips, will bring the power of accelerated computing to new markets and industries globally.”

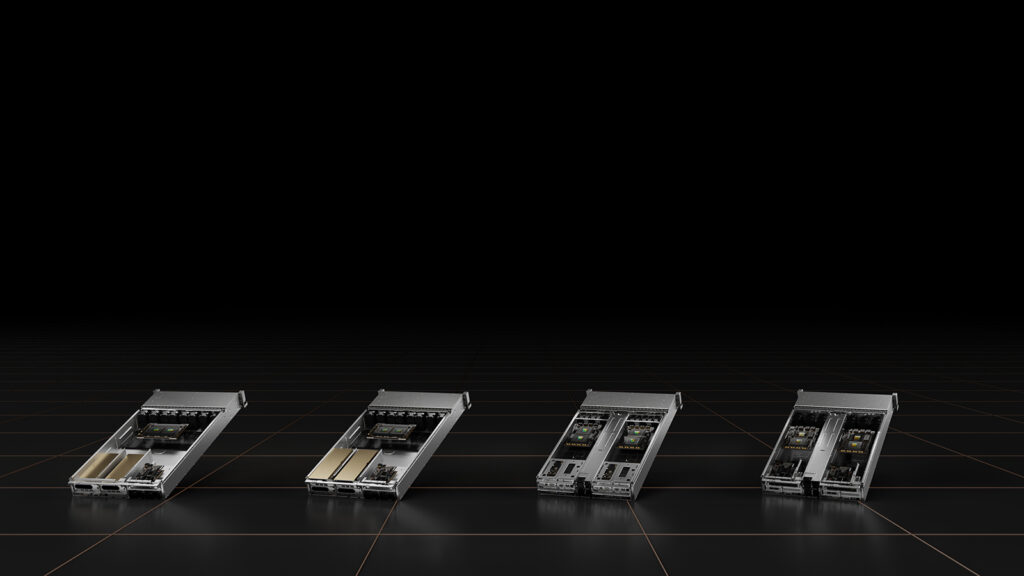

The coming servers are based on four new system designs. They feature the Grace CPU Superchip and Grace Hopper Superchip, which NVIDIA announced at its two most recent GTC conferences. The 2U form-factor designs provide the blueprints and server baseboards that original design manufacturers (ODMs) and original equipment manufacturers (OEMs) need to quickly bring systems to market for the NVIDIA CGX™ cloud gaming, NVIDIA OVX™ digital twin, and NVIDIA HGX™ AI and HPC platforms.

Supercharging Modern Workloads

The two NVIDIA Grace Superchip technologies enable a broad range of compute-intensive workloads across a multitude of system architectures.

The Grace CPU Superchip features two CPU chips connected coherently through an NVIDIA NVLink®-C2C interconnect. Together they provide up to 144 high-performance Armv9 cores with Scalable Vector Extensions and a 1 TB/s memory subsystem. According to NVIDIA, the design provides the highest performance and twice the memory bandwidth and energy efficiency of today’s leading server processors. It targets the most demanding HPC, data analytics, digital twin, cloud gaming and hyperscale computing applications.

The Grace Hopper Superchip pairs an NVIDIA Hopper™ GPU with a Grace CPU over NVLink-C2C in an integrated module designed to address HPC and giant-scale AI applications. Using the NVLink-C2C interconnect, the Grace CPU transfers data to the Hopper GPU 15× faster than traditional CPUs.

Broad Grace Server Portfolio for AI, HPC, Digital Twins and Cloud Gaming

The Grace CPU Superchip and Grace Hopper Superchip server design portfolio includes single-baseboard systems in one-, two- and four-way configurations. These are available across four workload-specific designs that server manufacturers can customize according to customer needs.

The NVIDIA HGX Grace Hopper systems for AI training, inference and HPC are available with the Grace Hopper Superchip and NVIDIA BlueField®-3 DPUs. Meanwhile, NVIDIA HGX Grace systems for HPC and supercomputing feature the CPU-only design with Grace CPU Superchip and BlueField-3. Also, the NVIDIA OVX systems for digital twins and collaboration workloads feature the Grace CPU Superchip, BlueField-3 and NVIDIA GPUs. Lastly, NVIDIA CGX systems for cloud graphics and gaming feature the Grace CPU Superchip, BlueField-3 and NVIDIA A16 GPUs.
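For readers who want to compare the four designs side by side, the paragraph above can be captured as a small lookup table. The following is only an illustrative sketch: the `ServerDesign` class, its field names and the `designs_with` helper are hypothetical names introduced here, not part of any NVIDIA software, and the entries simply restate the components listed in the article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ServerDesign:
    """One of the four reference designs, as described in the article."""
    platform: str              # NVIDIA platform name
    workloads: str             # target workloads named in the article
    superchip: str             # Grace CPU Superchip or Grace Hopper Superchip
    dpu: str                   # data processing unit
    extra_gpus: Optional[str]  # GPUs listed separately from the superchip, if any


# Entries restate the article; nothing here is measured or vendor-verified.
DESIGNS = (
    ServerDesign("HGX Grace Hopper", "AI training, inference and HPC",
                 "Grace Hopper Superchip", "BlueField-3", None),
    ServerDesign("HGX Grace", "HPC and supercomputing",
                 "Grace CPU Superchip", "BlueField-3", None),
    ServerDesign("OVX", "digital twins and collaboration",
                 "Grace CPU Superchip", "BlueField-3", "NVIDIA GPUs"),
    ServerDesign("CGX", "cloud graphics and gaming",
                 "Grace CPU Superchip", "BlueField-3", "NVIDIA A16 GPUs"),
)


def designs_with(superchip: str) -> list:
    """Return the platform names that use the given superchip."""
    return [d.platform for d in DESIGNS if d.superchip == superchip]


if __name__ == "__main__":
    for design in DESIGNS:
        gpus = design.extra_gpus or "none listed separately"
        print(f"{design.platform}: {design.superchip} + {design.dpu}; GPUs: {gpus}")
```

Calling `designs_with("Grace CPU Superchip")` on this table returns the three designs built around the Grace CPU Superchip, which makes it easy to see that only the HGX Grace Hopper design uses the combined CPU and GPU module.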

NVIDIA is extending its NVIDIA-Certified Systems™ program to servers using the NVIDIA Grace CPU Superchip and Grace Hopper Superchip, in addition to x86 CPUs. The first certifications of OEM servers are expected soon after partner systems ship.

Supported Software

The Grace server portfolio is optimized for NVIDIA’s rich computing software stacks, including NVIDIA HPC, NVIDIA AI, Omniverse™ and NVIDIA RTX™.