ASIA ELECTRONICS INDUSTRY: YOUR WINDOW TO SMART MANUFACTURING

Latest Edge-AI Vision-on-Chip Gears up for High-Level Robot Autonomy

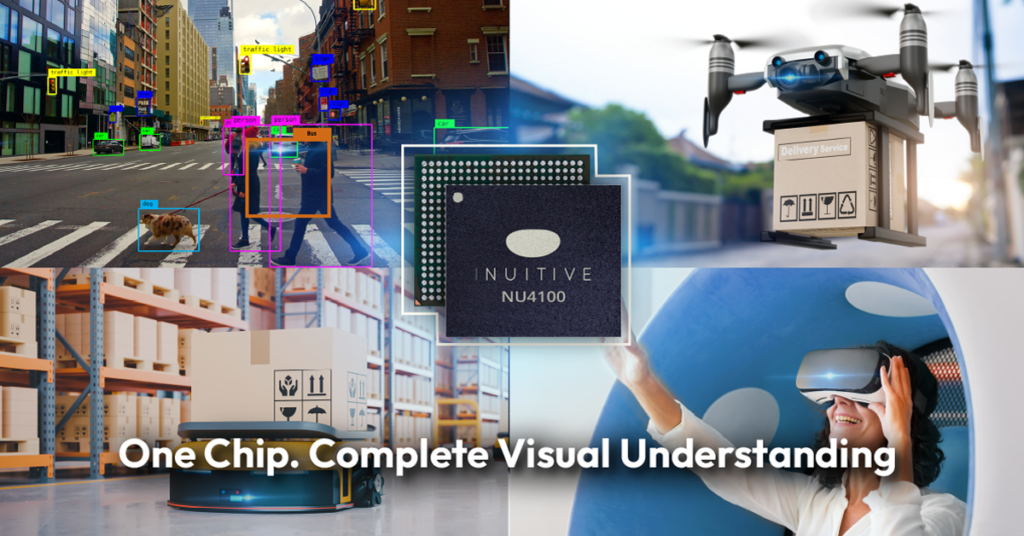

Israel-based Inuitive Ltd., a vision-on-chip processor company, has launched the NU4100, a new addition to its vision and AI IC portfolio. The NU4100 is based on Inuitive’s unique architecture and an advanced 12nm process technology. It integrates a dual-channel 4K ISP, enhanced AI processing, and depth sensing in a single-chip, low-power design. According to the company, these features set a new industry standard for edge-AI performance.

Ideal for Robots

The NU4100 is the second generation of the NU4x00 series. The series is suited to robotics, drones, virtual reality (VR), and edge-AI applications that demand aggregation, processing, packing, and streaming of data from multiple sensors. It is designed in particular for robots and other applications that must sense and analyze their environment using three, six, or more cameras and make real-time, actionable decisions based on that input.

“Robot designers demand higher resolutions, an ever-increasing number of channels, and high-performing, enhanced AI and VSLAM capabilities,” said Shlomo Gadot, Inuitive’s CEO. “The NU4100 addition to the Vision-on-Chip series of processors is a true revolution, based on all integrated vision capabilities, combined in a single, complete-mission computer chip. The integrated dual-camera ISP provides much-needed flexibility without having to add more components, which, in turn, require additional processing power at a higher price point.”

Gadot added, “Inuitive is committed to bringing the most advanced technology to the market. NU4500, the next processor in our roadmap, is planned for tape-out in Q1 2023 with an additional eight ARM A55 cores and more than double the AI compute power. It has an H.265 and H.264 video encoder and decoder and is set to be the ultimate single-chip solution for robotic applications.”

Supports Multi-camera Design

The NU4100 supports multi-camera designs. It can simultaneously process and stream two imager channels of up to 12MP, or 4K resolution, each at 60fps, while running advanced AI networks. The IC raises the level of integration for products using Inuitive technology. It delivers two to four times the AI processing performance of Inuitive’s first generation while consuming 20 percent less power.

According to Inuitive, leading consumer electronics (CE) and metaverse companies have already adopted the chip for their products in preference to alternatives. Customer products powered by the NU4100 will be available starting in Q1 2023.

“Robots are increasingly reliant on vision processors. Their ability to perceive and understand the environment is fundamental to achieving a higher level of robot autonomy,” said Dor Zepeniuk, CTO and VP of Product, Inuitive. “Processing streams of input from multiple cameras expands the robot’s independence and flexibility, while the integrated dual-channel 4K ISP improves the system’s capabilities. Both, in turn, serve the end goal of designing powerful products that are lower in cost.”

Main Features, Capabilities of New NU4100

The NU4100 features proprietary Inuitive Depth Vision Accelerators (IDVA). It offers a high-throughput, low-latency depth-from-stereo hardware engine and integrates SLAM hardware accelerators. It has general-purpose imaging and vision engines, a dual-camera ISP unit supporting up to 12MP per video stream, and a dual-core vision DSP with 384 GOPS, optimized for computer vision functions. It also has an efficient AI engine with 3.2 TOPS of processing power for DNNs and an ARM Cortex-A5 CPU running Linux. It offers connectivity for up to six camera devices and includes fast interfaces such as USB 3.0, MIPI CSI/DSI with Rx and Tx, and LPDDR4.

Applications

The high resolution and advanced AI processing of the new IC can benefit many other edge-AI applications. Industry 4.0 facilities, for example, can leverage the edge-AI performance and image resolution for improved process control and a higher level of automation. Likewise, drones can use the ISP and neural-network-based vision effects, such as low-light enhancement, to operate autonomously in both dark and lit environments.